When only 10–20 µL of sample is available, the core challenge is not just analytical sensitivity—it is sample allocation. In low-volume cytokine studies, every decision to add duplicates, matrix-matched controls, or reruns trades directly against panel breadth, future confirmatory work, and sometimes against other downstream assays competing for the same specimen. Luminex is often a strong fit because it compresses many analytes into a single multiplex well, but it does not remove the need to predefine how scarce sample will be spent across the initial run, essential controls, and a tightly limited rerun reserve.

Who This Resource Is For

Typical study scenarios

- Human serum or plasma biomarker studies with highly limited residual volume

- Mouse or small-animal studies with low-volume longitudinal sampling

- Precious clinical cohorts where repeat collection is difficult or impossible

- Early-stage translational studies balancing panel breadth against sample preservation

Key questions addressed on this page

- How should replicates be planned when only 10–20 µL of sample is available?

- Which controls are essential, and which can be streamlined under tight volume constraints?

- When should re-runs be allowed, and when should they be avoided?

- Why is Luminex especially useful for low-volume cytokine profiling?

Why Low Sample Volume Changes Cytokine Study Design

The core constraint

With 10–20 µL samples, every replicate, control, and rerun consumes meaningful study material. That makes "standard habits" (like duplicating everything or rerunning reflexively) hard to sustain, because volume spent in one place can't be recovered later. In practice, planning a low-volume cytokine assay becomes a balancing act between defensible confidence and irreversible sample use.

Why Luminex is especially useful for low-volume cytokine profiling

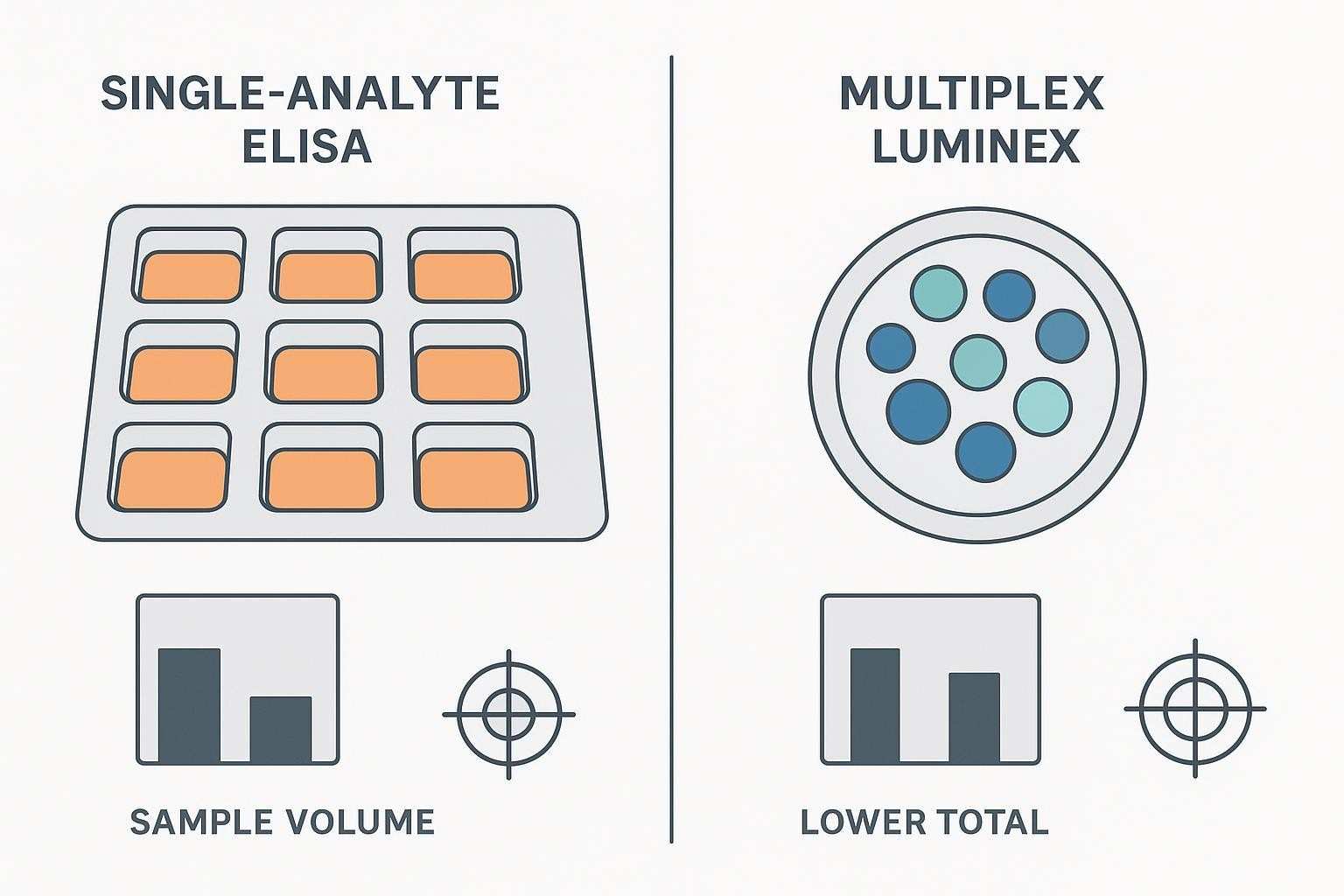

Traditional single-analyte ELISA workflows become inefficient quickly when residual sample is scarce, because each marker draws on a separate share of limited material. Luminex reduces that burden by measuring many analytes in one multiplex well, which can preserve far more decision value per unit of sample. Even so, low-volume feasibility should be described carefully: kit-specific workflows often require a defined volume of diluted sample per well, and the true original-sample demand depends on matrix, dilution factor, preparation dead volume, and whether duplicates or reruns are reserved in advance. For that reason, the main advantage of Luminex in low-volume studies is not simply lower per-marker sample use, but greater flexibility to allocate scarce material across analyte breadth, essential controls, and a tightly limited rerun reserve.
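The dependence of original-sample demand on dilution factor, dead volume, and well count can be made concrete with a little arithmetic. The sketch below is illustrative only: the function name is hypothetical, the 25 µL well volume is taken from the upper end of the range quoted on this page, and the 4-fold dilution and 5 µL dead volume are assumptions that should be replaced with the values from the kit SOP and your own pipetting setup.

```python
def original_volume_needed(well_volume_ul, dilution_factor, n_wells=1, dead_volume_ul=0.0):
    """Return the µL of undiluted sample needed to load n_wells of diluted sample.

    dilution_factor is the fold dilution (4 means 1 part sample in 4 parts total).
    dead_volume_ul is diluted volume lost to tubes and tips (an assumed value).
    """
    if dilution_factor < 1:
        raise ValueError("dilution_factor must be >= 1")
    if n_wells < 1:
        raise ValueError("n_wells must be >= 1")
    diluted_total_ul = well_volume_ul * n_wells + dead_volume_ul
    return diluted_total_ul / dilution_factor


# Assumed inputs: 25 µL diluted sample per well, 4-fold dilution, 5 µL dead volume.
single = original_volume_needed(25.0, 4, n_wells=1, dead_volume_ul=5.0)
duplicate = original_volume_needed(25.0, 4, n_wells=2, dead_volume_ul=5.0)
print(f"single well: {single:.2f} µL, duplicate wells: {duplicate:.2f} µL")
# prints: single well: 7.50 µL, duplicate wells: 13.75 µL
```

Under these assumed numbers, a duplicate consumes most of a 15 µL specimen and leaves almost nothing for a rerun, which is the trade-off the rest of this page is about.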

Why this matters in real projects

Low-volume studies punish "extra" work that doesn't change the decision. Too many replicates can force you to drop analytes or eliminate any rerun option. Too few or poorly chosen controls can leave you unable to defend data quality when results are questioned. And ad hoc reruns often consume the last remaining material without resolving the core issue. In the end, sample allocation choices directly determine reportability and interpretability.

Common settings where this problem appears

This design tension shows up most often in pediatric or rare disease cohorts, small-animal or serial bleed studies with low recovery volumes, biobank remnants with strict use limits, and high-value longitudinal samples that also need to support other downstream assays.

| Platform | Typical sample use | Analytes per well | Practical outcome with scarce sample |
|---|---|---|---|
| Single-analyte ELISA | 50–100 µL per marker (per test) | 1 | Broad profiling becomes impractical; rapid sample exhaustion |
| Luminex multiplex | 12.5–25 µL diluted sample per well (many cytokines) | 10–50+ (panel dependent) | Micro-volume feasible; better breadth and reserve for replicates/controls |

What Makes 10–20 µL Studies Different from Standard Volume Projects

Sample volume is no longer a technical detail

At 10–20 µL, volume planning becomes part of assay design—not something you can "figure out at the bench." Panel breadth, replicate strategy, and contingency volume for selective reruns are coupled decisions, and the trade-offs should be made explicit before the first plate is run.

The cost of routine assumptions increases

Many default habits are suddenly expensive. Duplicates may no longer be the automatic best choice if they erase your ability to rerun only what truly matters. Likewise, piling on extra control layers can reduce study coverage without increasing actionability. Even a well-intentioned rerun can be counterproductive if it consumes the last usable volume while leaving the interpretation unchanged.

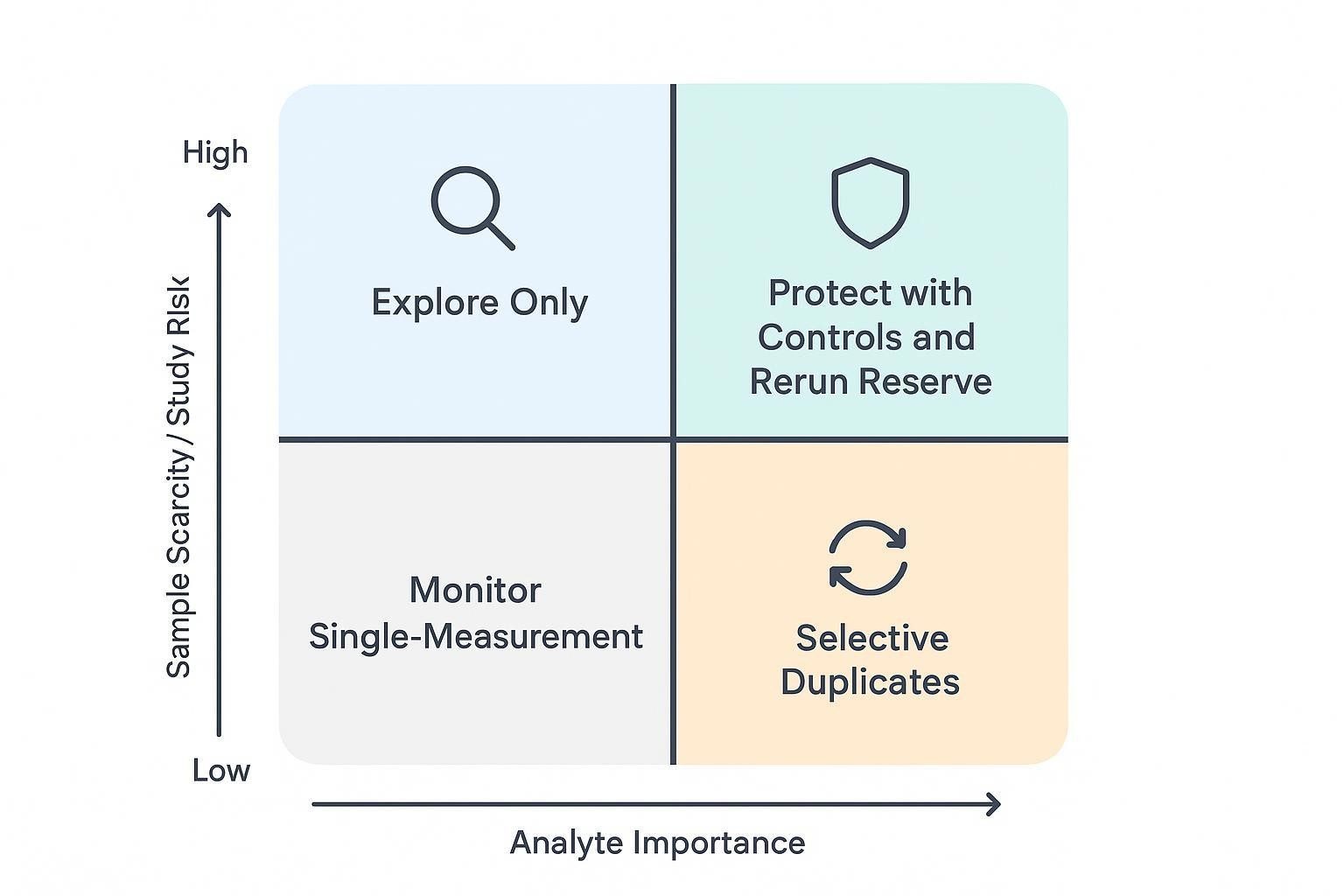

Fit-for-purpose planning becomes more important

The practical consequence is that you have to match rigor to purpose. Some analytes warrant decision-grade confidence; others can be treated as exploratory. Some samples may justify reruns under clear technical triggers; others should be "one and done." The study objective—not habit—should decide where limited volume is spent.

For context on multiplex feasibility and run acceptance logic (e.g., QC ranges, bead count considerations), consult proficiency and vendor resources such as EQAPOL's multi-site proficiency reports and kit-specific QC documentation (see References).

What Should Be Decided Before the First Sample Is Run

Study objective and decision threshold

Before you touch a plate map, agree on what "good enough" looks like for this project. Are you doing exploratory profiling, or do you need decision-grade biomarker outputs? Is the goal to detect broad signals, or to quantify a smaller set of markers with high confidence? The answers determine which results will be allowed to drive study conclusions—and therefore where limited volume should be spent.

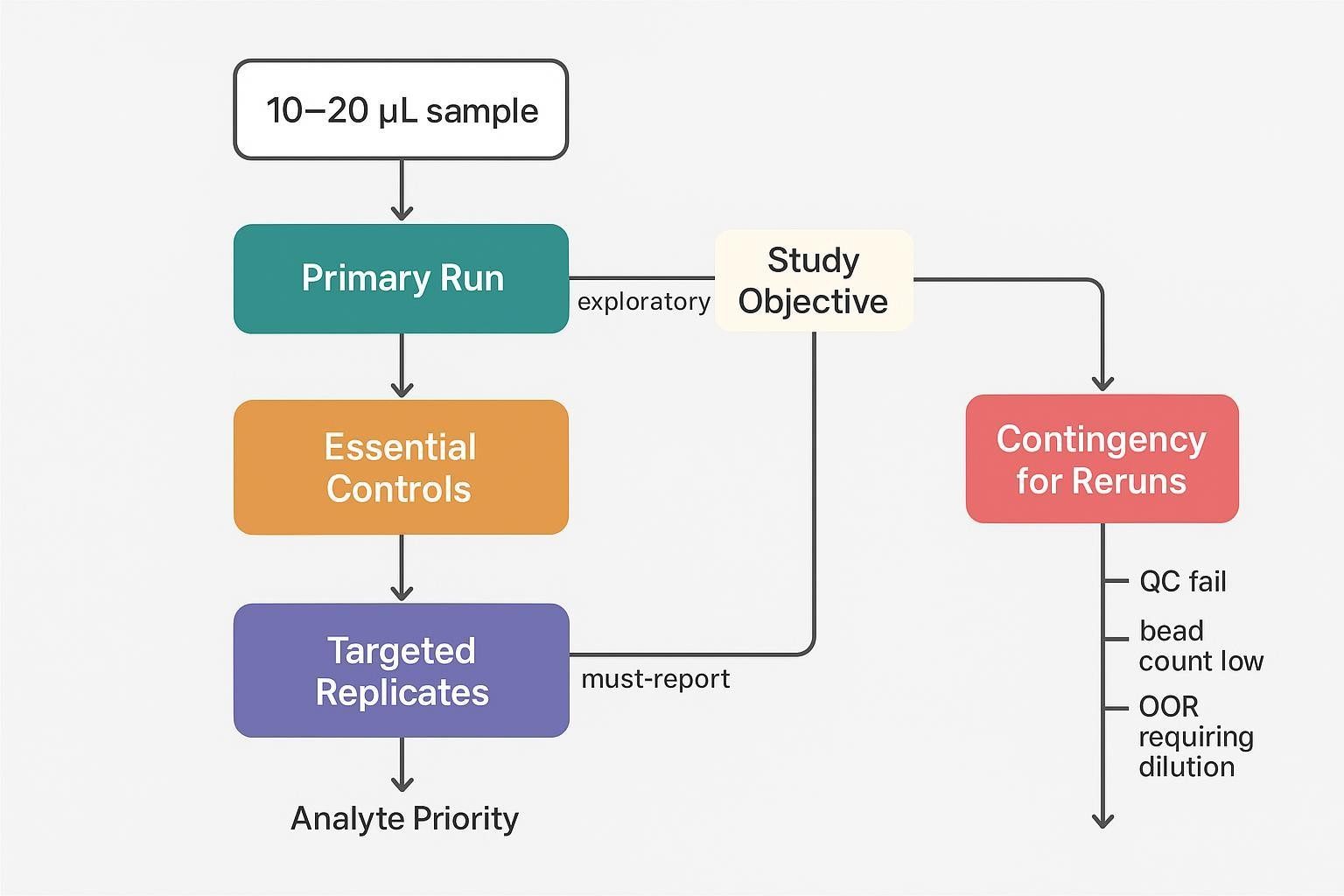

Sample allocation priorities

A practical way to stay disciplined is to treat sample volume like a budget with distinct line items. You typically need volume for the primary run, any technical replicates that are truly justified, required controls, and a reserved contingency for selective reruns. If samples must also support other downstream assays, decide up front what volume is protected for those workflows so the cytokine panel doesn't silently consume it.
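The budget idea can be sketched as a small ledger that refuses any allocation exceeding what is left. This is a minimal illustration, not a validated tool: the class name, line-item labels, and all volumes are hypothetical placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class SampleBudget:
    """Per-sample volume ledger (all volumes in µL of original sample)."""
    available_ul: float
    items: dict = field(default_factory=dict)

    @property
    def remaining_ul(self):
        return self.available_ul - sum(self.items.values())

    def allocate(self, name, volume_ul):
        """Record a line item, or raise if it would overdraw the sample."""
        if volume_ul < 0:
            raise ValueError("volume_ul must be non-negative")
        if volume_ul > self.remaining_ul:
            raise ValueError(
                f"cannot allocate {volume_ul} µL to '{name}': "
                f"only {self.remaining_ul} µL remain"
            )
        self.items[name] = self.items.get(name, 0.0) + volume_ul


# Hypothetical 15 µL specimen with assumed line items.
budget = SampleBudget(available_ul=15.0)
budget.allocate("primary run", 7.5)
budget.allocate("protected for other assays", 4.0)
budget.allocate("rerun reserve", 3.0)
print(budget.remaining_ul)  # prints: 0.5
```

With this ledger, a later attempt to add a duplicate well (for example `budget.allocate("duplicate well", 6.25)`) raises an error instead of silently consuming the volume protected for other workflows.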

Analyte prioritization

Not every analyte needs the same standard of completeness or precision. Define tiers (for example: must-report, important but secondary, and exploratory). Also flag targets that are likely to be problematic—very low abundance cytokines near the assay's lower limit, or analytes known to be sensitive to matrix effects—so you can plan dilution checks or selective duplication where it matters.

Operational assumptions

Finally, write down the operational rules that will control sample burn. What failure modes do you realistically expect (low bead count, QC out of range, out-of-range results that might be fixed by a dilution change)? What level of missingness is acceptable? Under what technical triggers will a rerun be permitted—and how many attempts are allowed? In low-volume Luminex cytokine profiling, these assumptions are not paperwork; they're what prevents accidental depletion of irreplaceable specimens.

How to Plan Replicates Under Tight Sample Constraints

When technical replicates add real value

- Confirming consistency for decision-critical analytes

- Supporting higher-confidence datasets in confirmatory studies

- Detecting assay-level variability when sample count is small but conclusions are high-stakes

When replicates may not be the best use of sample

- Exploratory studies prioritizing analyte breadth over per-sample precision

- Cases where duplicate testing would eliminate rerun reserve

- Situations where control strategy contributes more to data confidence than universal replicates

Practical replicate models for low-volume studies

- Duplicate all samples for a small, decision-critical panel

- Single measurement for all samples plus duplicates for sentinel samples

- Single measurement for all samples with duplicate testing limited to bridge or QC samples

- Stage-based design where exploratory findings are followed by focused confirmation
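To compare the first three models in terms of plate usage, one can count sample wells directly. The sketch below assumes that a "duplicate" means one extra well per duplicated sample, and that bridge or QC duplicates draw on pooled material rather than study samples. The model names and the 20-sample, 4-sentinel study are hypothetical.

```python
def sample_wells(n_samples, model, n_sentinel=0):
    """Count wells consumed by study samples under a given replicate model."""
    if n_samples < 0 or n_sentinel < 0:
        raise ValueError("counts must be non-negative")
    if model == "duplicate_all":
        return 2 * n_samples
    if model == "single_plus_sentinel":
        if n_sentinel > n_samples:
            raise ValueError("n_sentinel cannot exceed n_samples")
        # One well per sample, plus one extra well for each sentinel sample.
        return n_samples + n_sentinel
    if model == "single_only":
        # Duplicates limited to bridge/QC material, so no extra sample wells.
        return n_samples
    raise ValueError(f"unknown model: {model}")


for model in ("duplicate_all", "single_plus_sentinel", "single_only"):
    print(model, sample_wells(20, model, n_sentinel=4))
# prints:
# duplicate_all 40
# single_plus_sentinel 24
# single_only 20
```

Well counts are only half the picture: each extra well also draws original sample, so the per-sample volume cost should be checked alongside plate capacity.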

Questions to ask before committing to duplicates

- Will duplicates materially change the study decision?

- Which analytes are too important to leave without redundancy?

- Is the sample volume better spent on broader coverage or higher per-sample confidence?

- How much rerun flexibility would be lost if duplicates are used universally?

For a practical overview of multiplex options that conserve volume, see Luminex Multiplex Cytokine Panel Service and the serum/plasma-specific overview in Serum & Plasma Luminex Cytokine Assay.

How to Choose the Right Controls When Volume Is Limited

Controls that usually remain essential

- Assay-level controls needed to judge run validity

- Matrix-relevant controls that help interpret low-signal or variable performance

- Bridge or pooled controls when cross-batch comparability matters

Controls that require careful scoping

- Excessive control layers that consume volume without improving actionability

- Controls copied from standard protocols without fit-for-purpose adjustment

- Redundant controls that do not match the study's likely failure modes

Building a control strategy around study risk

- Focus on controls that reveal the most likely technical problems

- Use pooled or shared materials where possible

- Align control placement with batch structure and reporting needs

- Define in advance how control outcomes will affect acceptance or rerun decisions

Low-volume control planning questions

- Which controls are required for assay validity?

- Which controls are most informative for this matrix and analyte set?

- Can pooled controls replace repeated use of scarce individual material?

- How much control burden can the study absorb without compromising sample coverage?

When selecting species-specific panels that match your matrix and objectives, review options such as Mouse Multiplex Cytokine Panel Service and disease-focused offerings like Mouse Inflammatory Panel Service.

When Re-runs Should Be Planned, Limited, or Avoided

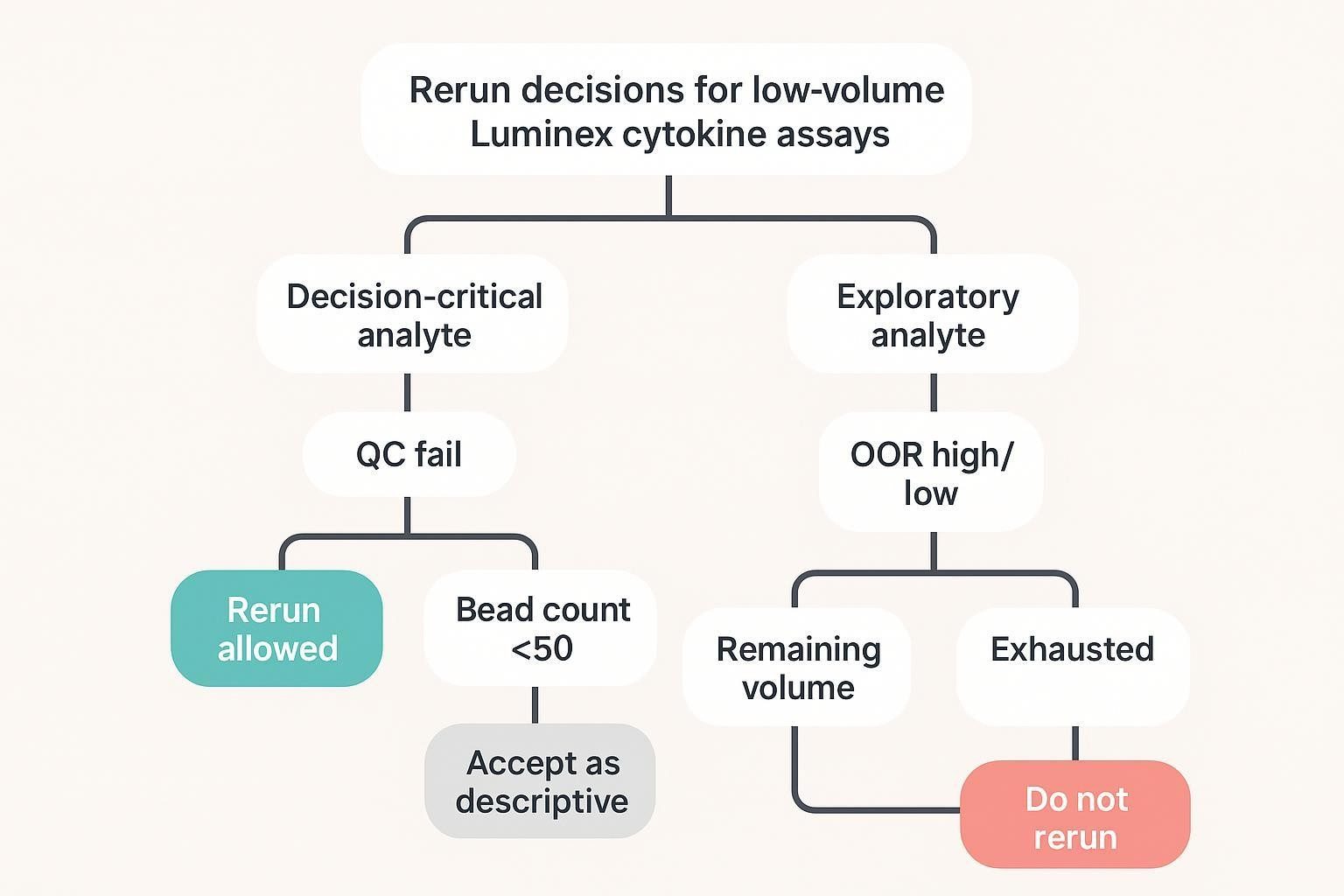

Why reruns must be predefined in low-volume studies

- Reruns should not be treated as unlimited troubleshooting

- Volume consumed in avoidable reruns may be impossible to replace

- Rerun policy affects how initial sample allocation should be structured

Situations where reruns may be justified

- Decision-critical analytes fail because of clear technical error

- Samples fall outside expected range in a way that could be resolved by adjusted dilution

- Control performance indicates a run-level issue affecting interpretability

Situations where reruns may not be worth the sample

- Exploratory analytes with limited decision impact

- Marginal signal issues unlikely to improve meaningfully

- Cases where rerun would consume the last usable volume without changing the conclusion

How to create a rerun policy before study launch

- Define which analytes qualify for rerun

- Define which technical triggers permit rerun

- Set limits on rerun attempts per sample

- Reserve contingency volume only where it supports the study objective
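A rerun policy of this kind can be written down as an explicit rule before launch so that it is applied the same way to every sample. The following is a minimal sketch under stated assumptions: the trigger and tier labels mirror the categories used on this page, but the one-attempt limit, the choice to restrict reruns to must-report analytes, and all field names are illustrative.

```python
from dataclasses import dataclass

# Assumed policy settings; adjust to the study's own predefined rules.
ALLOWED_TRIGGERS = {"qc_failure", "low_bead_count", "out_of_range_dilution_fixable"}
RERUN_TIERS = {"must_report"}
MAX_ATTEMPTS = 1


@dataclass
class RerunRequest:
    sample_id: str
    analyte_tier: str
    trigger: str
    attempts_so_far: int
    remaining_ul: float
    rerun_cost_ul: float


def rerun_allowed(req):
    """Return (decision, reason) for a rerun request under the predefined policy."""
    if req.analyte_tier not in RERUN_TIERS:
        return False, "analyte tier not eligible for rerun"
    if req.trigger not in ALLOWED_TRIGGERS:
        return False, "trigger is not a predefined technical trigger"
    if req.attempts_so_far >= MAX_ATTEMPTS:
        return False, "rerun attempt limit reached"
    if req.rerun_cost_ul > req.remaining_ul:
        return False, "insufficient remaining volume"
    return True, "meets predefined rerun criteria"


ok = RerunRequest("S01", "must_report", "low_bead_count", 0, 4.0, 3.5)
denied = RerunRequest("S02", "exploratory", "low_bead_count", 0, 4.0, 3.5)
print(rerun_allowed(ok))      # prints: (True, 'meets predefined rerun criteria')
print(rerun_allowed(denied))  # prints: (False, 'analyte tier not eligible for rerun')
```

Returning a reason along with the decision makes it easy to log why each rerun was approved or refused, which supports the documentation goals discussed later on this page.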

For custom panel design and acceptance logic (replicates, controls, rerun triggers) aligned to your objective, see Luminex Multiplex Assay Customization.

How Panel Breadth Affects Low-Volume Study Design

Broader panels increase pressure on sample planning

- More analytes do not always translate to better study decisions

- Larger panels may increase the chance of mixed performance across targets

- Panel size can indirectly increase rerun pressure if expectations are not aligned

When a narrower panel is the better choice

- The study has a clear set of decision-driving markers

- Sample scarcity makes universal exploratory coverage impractical

- The cost of marginal analytes is loss of confidence in core outputs

How to think about breadth versus reportability

- A broader panel may generate more signals but lower per-analyte confidence

- A narrower panel may improve reportability and data consistency

- The right balance depends on whether the study is exploratory, translational, or confirmatory

Designing for Limited Sample Without Sacrificing Data Quality

Protect the most decision-relevant analytes

- Prioritize markers tied directly to the biological or clinical question

- Avoid letting low-value analytes consume replicate or rerun budget

- Consider whether split logic or panel refinement is needed

Match rigor to study purpose

- Exploratory studies may accept single measurements and selective reruns

- Confirmatory studies may require stricter replicate and control planning

- Longitudinal, validation-oriented, or decision-grade studies may justify tighter control structures even under volume pressure

Build transparency into the design

- Predefine how missing values will be handled

- Separate decision-grade from descriptive outputs where needed

- Document the rationale for replicate, control, and rerun choices

How Pilot Work Should Inform the Final Design

What pilot work should test first

- Real sample behavior in the intended matrix

- Whether key analytes are consistently detectable

- Whether duplicates improve confidence enough to justify the extra volume

- Whether likely rerun scenarios can be anticipated and controlled
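One common way to summarize whether pilot duplicates agree is the percent coefficient of variation (%CV) between the two wells. The sketch below computes it with the standard library; the analyte names, concentrations, and the 20% flagging threshold are made-up illustrations, and the acceptance limit should come from your own SOP or validation plan.

```python
from statistics import mean, stdev


def duplicate_cv_percent(a, b):
    """Percent CV of two duplicate readings (sample standard deviation / mean)."""
    m = mean([a, b])
    if m == 0:
        raise ValueError("mean of duplicates is zero; %CV is undefined")
    return stdev([a, b]) / m * 100


CV_LIMIT_PERCENT = 20.0  # assumed threshold, not a universal rule

# Hypothetical pilot duplicates (concentration units are arbitrary here).
pilot_pairs = {"IL-6": (100.0, 110.0), "TNF-alpha": (4.0, 7.0)}

for analyte, (a, b) in pilot_pairs.items():
    cv = duplicate_cv_percent(a, b)
    status = "ok" if cv <= CV_LIMIT_PERCENT else "flag"
    print(f"{analyte}: CV = {cv:.1f}% ({status})")
```

In this made-up data, the first pair falls within the assumed limit and the second is flagged. If most decision-critical analytes show tight agreement in the pilot, universal duplicates may add little; if a few are consistently flagged, those are candidates for selective duplication.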

What pilot results should answer

- Which analytes are robust under low-volume conditions

- Which controls are most informative

- Which samples or analytes are likely to trigger reruns

- Whether the proposed study design protects the main decision question

Common pilot mistakes in low-volume studies

- Using non-representative samples

- Testing ideal conditions rather than realistic constraints

- Assuming standard duplicate logic is always optimal

- Failing to quantify the opportunity cost of reruns

Common Mistakes in Low-Volume Cytokine Profiling

Treating duplicates as automatic

- Duplicate testing is not always the best default under extreme volume constraints

- Universal duplicates may reduce study flexibility without proportional gain

Overbuilding the control scheme

- More controls do not always create better data

- Control design should reflect actual study risk, not habit

Leaving rerun logic undefined

- Ad hoc reruns can deplete valuable samples quickly

- Rerun policy should be tied to analyte importance and technical criteria

Trying to preserve every analyte equally

- Not every marker deserves the same use of limited volume

- Design decisions should reflect study value, not panel symmetry

Separating assay setup from reporting policy

- Low-volume design directly affects missingness, comparability, and interpretability

- Reporting expectations should shape study planning from the start

A Practical Planning Framework for 10–20 µL Studies

Step 1: Define the study goal

- Discovery

- Translational prioritization

- Mechanistic readout

- Decision support

- Longitudinal monitoring

Step 2: Rank analytes by importance

- Must-report

- Important but secondary

- Exploratory

Step 3: Decide where sample volume creates the highest value

- Initial run coverage

- Replicate support for critical markers

- Essential controls

- Targeted rerun reserve

Step 4: Choose the least risky design

- Broader panel with minimal redundancy

- Narrower panel with more robust control

- Selective duplicates and selective reruns

- Staged strategy from broad screen to focused confirmation

Step 5: Predefine reporting rules

- Missing value handling

- Conditions for rerun

- Criteria for decision-grade versus descriptive outputs

- Acceptable limits of incompleteness
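Reporting rules like these are easiest to apply consistently when they are written as an explicit check. As one hedged illustration, the sketch below labels an analyte by its fraction of missing values. The 20% cutoff and the function name are assumptions, and a real study would normally combine missingness with other criteria such as QC performance.

```python
def classify_analyte(values, max_missing_fraction=0.2):
    """Label an analyte from its per-sample results (None marks a missing value).

    Returns (label, missing_fraction). The cutoff is an assumed example value.
    """
    if not values:
        raise ValueError("values must not be empty")
    missing_fraction = sum(v is None for v in values) / len(values)
    if missing_fraction <= max_missing_fraction:
        return "decision-grade", missing_fraction
    return "descriptive", missing_fraction


# Hypothetical results for a 20-sample study.
mostly_complete = [12.0] * 18 + [None] * 2
sparse = [3.0] * 12 + [None] * 8

print(classify_analyte(mostly_complete))  # prints: ('decision-grade', 0.1)
print(classify_analyte(sparse))           # prints: ('descriptive', 0.4)
```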

A worked allocation example for a 20-sample study with ~10–20 µL originals is below. Think of it as a starting template to adapt in pilot work.

| Allocation element | Example setup (single 96-well plate; multiplex panel) | Notes |
|---|---|---|
| Primary run | 20 samples × single measurement | Use a predefined dilution strategy that generates adequate working volume without exhausting originals |
| Decision-critical duplicates | 4 sentinel samples × duplicate wells | Apply duplication only where added precision is expected to change interpretation |
| Controls | Standards and vendor QCs per kit SOP, plus blank and pooled/bridge control where justified by batch design | Keep assay-validity controls fixed; streamline only the optional layers |
| Rerun reserve | Hold minimal volume for samples meeting predefined triggers | Example triggers: QC failure, bead count below the predefined minimum acceptance threshold for the assay/SOP, or out-of-range results plausibly resolvable by dilution adjustment |

Pre-Study Checklist

Scientific checklist

- Define the study question

- Rank markers by decision value

- Estimate expected concentration behavior

- Decide whether breadth or confidence matters more

Analytical checklist

- Confirm feasibility in the intended matrix

- Decide replicate strategy

- Finalize essential controls

- Define rerun triggers and rerun limits

Operational checklist

- Calculate total sample allocation

- Reserve contingency volume where justified

- Align design with batch structure

- Document assumptions before launch

FAQ

Are duplicates always necessary with only 10–20 µL samples?

Not always. Use selective duplicates tied to decision-critical analytes or sentinel samples, and protect a small rerun reserve so you can resolve true technical failures.

Should controls be reduced when sample is very limited?

Yes—but only selectively. Keep assay-validity controls and the controls most relevant to the study's likely failure modes. Streamline redundant or low-information control layers, especially when they add operational burden without changing acceptance or rerun decisions.

How much sample should be reserved for reruns?

Only enough to protect decision-critical outcomes under predefined triggers (e.g., failed QC, low bead counts, or out-of-range results fixable by dilution changes).

Is a smaller panel better for low-volume samples?

Often yes—when narrowing improves confidence and reportability of priority markers. Exploratory screens can be staged ahead of confirmatory panels to balance breadth and depth.

Can a low sample volume cytokine assay still deliver reliable results?

Yes—provided that feasibility is demonstrated in the intended matrix and the study predefines where scarce volume will be spent on selective replication, essential controls, and limited reruns.

References:

- Rountree W et al. Sources of variability in Luminex bead-based cytokine assays: evidence from twelve years of multi-site proficiency testing. J Immunol Methods. 2024. PubMed: https://pubmed.ncbi.nlm.nih.gov/38823575/ Full text: https://pmc.ncbi.nlm.nih.gov/articles/PMC11246216/

- Kelley M et al. Large Molecule Run Acceptance: Recommendation for Best Practices. AAPS J. 2014. PubMed: https://pubmed.ncbi.nlm.nih.gov/24492909/ Full text: https://pmc.ncbi.nlm.nih.gov/articles/PMC3933574/

- Bio‑Rad. Bio‑Plex Pro Human Cytokine Assays — Manual (typical 12.5 µL/well and matrix guidance). 2023. https://www.bio-rad.com/webroot/web/pdf/lsr/literature/10000092045.pdf

- MilliporeSigma. MILLIPLEX Multiplex Assays — Equipment Settings & Data Analysis (bead count guidance). 2022. https://www.sigmaaldrich.com/US/en/technical-documents/product-supporting/milliplex/equipment-settings-data-analysis-milliplex-multiplex-assays

- Andreasson U et al. A Practical Guide to Immunoassay Method Validation. Bioanalysis. 2015. Full text: https://pmc.ncbi.nlm.nih.gov/articles/PMC4541289/